Analysis

CA120: A rocky road for down-ballot propositions

Illustration by Tim Foster, Capitol Weekly

As Capitol Weekly reported today, the November ballot is growing: seven measures have already qualified, and another 66 are waiting in the wings. Most won’t qualify, so there is little reason to fear a 48-measure ballot like the one California saw in 1914. But we could approach or exceed the modern high-water mark of 29 on the 1988 Primary Election Ballot, and we will definitely exceed the average of 8.5 measures per ballot since 2000.

When and how ballot measures are put before voters has been controversial in California since their inception.

In 2011, the Legislature moved all citizen-generated ballot initiatives to the General Election. This was done, ostensibly, to ensure that voters who are deciding on these big issues of statewide importance are doing so in an election with higher, and therefore more representative, turnout.

In doing so, the Legislature has loaded the General Election with what should be an increasing number of measures. Based on recent history, our projections show that instead of 8.5 ballot measures on each of two successive ballots, we should see an average of 17 measures on the single November ballot.

This year’s long ballot, and the prospect of seeing a dozen or so measures each cycle, brings up an often-repeated fear: that voters, fatigued by the length of the ballot and the complexity of the measures, will reach a breaking point. Anecdotally, we hear stories of voters who stop voting on measures, or who, in frustration, start voting no on everything. It’s as if they are protesting the number of measures they’re facing, rather than judging each measure on its merits.

While anecdotes are nice, we have many years’ worth of data from which we can observe patterns and determine if longer ballots do, in fact, have a negative impact on measures.

Collecting data from the Secretary of State’s Office, we have built a database of total votes cast, the number of votes for and against each ballot measure, and the success or failure of each since 2000.

And the results are fairly clear and consistent: In at least two ways, being further down the ballot harms ballot measures.

In campaigns, we are very familiar with the struggle to get voters to the polls. But once they get there, we treat it as a given that they are going to diligently cast their votes. Yet every year there is a small difference between the number of voters in a precinct and the total votes cast for each individual measure. In election-official parlance, these are “undervotes,” and they occur when a race on a ballot, or an entire ballot, is left blank.

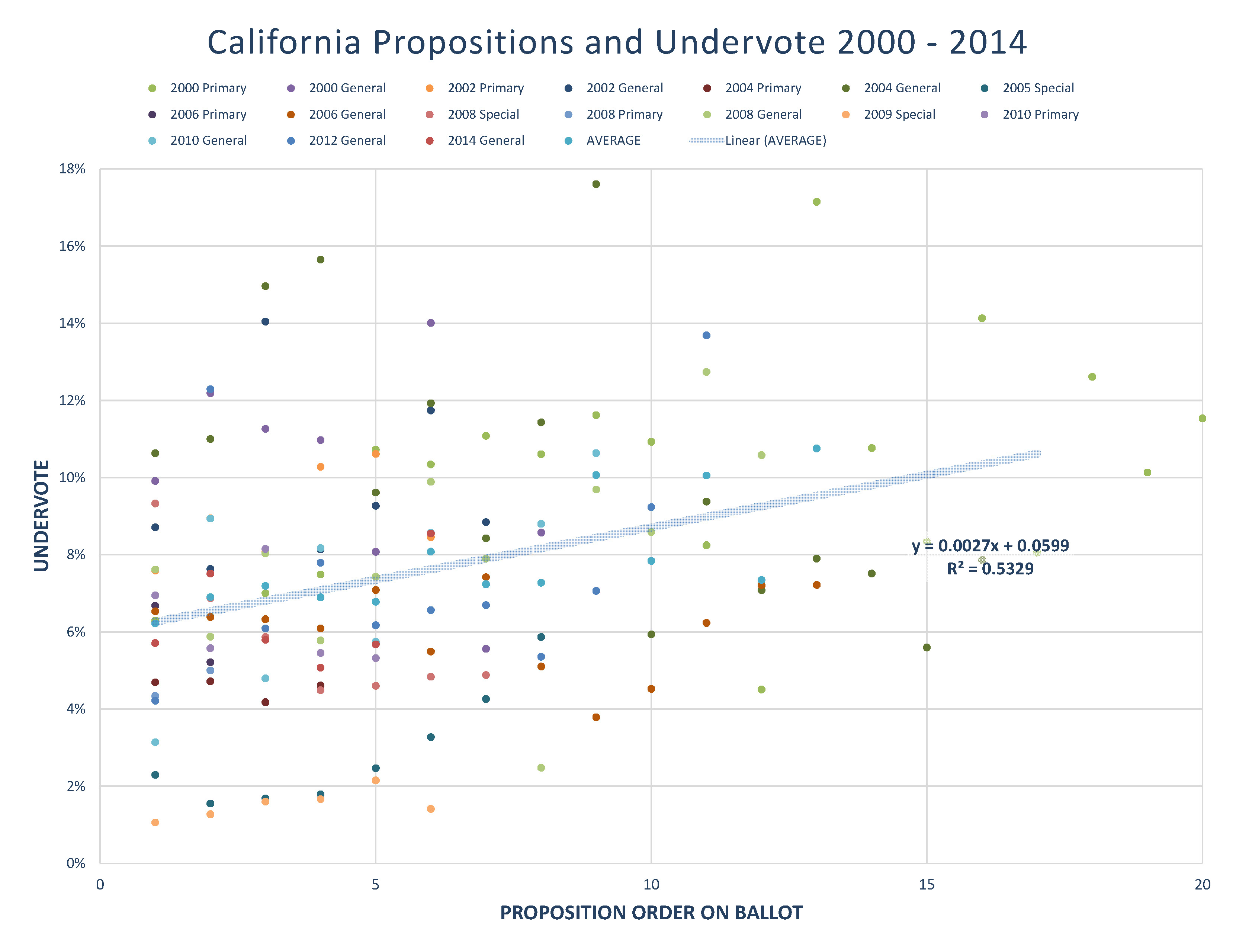

In order to identify the undervote, and the extent to which the undervote is linked to the order that a measure appears on the ballot, we have laid out each of the past 17 statewide elections and created a simple scatterplot for each ballot’s measures by the order they appeared.

From this, we were able to create a trend line that traces the average undervote across ballots. As the graphic above shows, the average undervote for measures in the first position was 6%, while the average undervote for the 10th measure exceeds 8%.

Going further into our analysis toolbox, we can establish a mathematical formula for this line in the familiar high school algebra form Y = MX + B, where X is the measure’s position on the ballot, Y is the undervote, M is the slope of the line, and B is the intercept, or starting point.

So, what does this math tell us?

This scatterplot and formula, called a simple linear regression, shows that there is a positive relationship between these two variables: There is an upward trend of a bit more than a quarter-point in undervote as the proposition moves each spot further down the ballot.
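For readers who want to see the mechanics, here is a minimal Python sketch of an ordinary least-squares fit of undervote against ballot position. The data points are invented for illustration and are not the Secretary of State figures behind this analysis; the function name `fit_line` is likewise hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = m*x + b; returns (m, b)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: covariance of x and y divided by the variance of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    m = sxy / sxx
    # Intercept: the fitted line passes through the point of means.
    b = mean_y - m * mean_x
    return m, b


# Hypothetical (ballot position, undervote %) pairs, NOT real election data.
positions = [1, 2, 3, 4, 5, 6, 7, 8]
undervote_pct = [6.1, 6.0, 6.9, 6.6, 7.3, 7.0, 7.9, 7.6]

slope, intercept = fit_line(positions, undervote_pct)
print(f"undervote = {slope:.2f} * position + {intercept:.2f}")
```

In this sketch the intercept is the line’s value at position zero, so the trend-line value for the first measure on the ballot is the slope plus the intercept.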

If the 2016 ballot has 20 measures, we would expect the first one to have around a 6% undervote, then each successive measure would lose a bit more and more, on average losing one percent of voters for every four spots down the ballot. A measure at the tail end of the ballot could be ignored by 11% of the voters who actually showed up for the election.
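The arithmetic behind that projection can be sketched in a few lines of Python. The two constants are the rounded figures quoted in the text, not the fitted regression coefficients, and the function name `projected_undervote` is hypothetical.

```python
FIRST_POSITION_UNDERVOTE = 6.0  # percent; approximate figure quoted in the text
SLOPE_PER_POSITION = 0.25       # points per spot; the text says "a bit more than" this


def projected_undervote(position):
    """Trend-line undervote (%) for a measure at a 1-based ballot position."""
    return FIRST_POSITION_UNDERVOTE + SLOPE_PER_POSITION * (position - 1)


for pos in (1, 10, 20):
    print(pos, projected_undervote(pos))
```

With exactly a quarter-point per spot, the 20th measure lands at 10.75%; a slope slightly above a quarter-point would push it to roughly the 11% cited above.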

This data does come with some caveats. Like other attempts to take a fluctuating event and place it on a linear path, this is just an approximation. And while we can see a general relationship, the fact is that voters are probably doing a bit of hunting and pecking as well. Voters may look for some propositions they know of, and avoid others.

For example, in 2004 a ballot measure on local government finance (Prop 65) suffered from a whopping 18% undervote, likely because it seemed so ambiguous and wonky. That snoozer was followed by the higher-salience, controversial Three-Strikes revision (Prop 66), which had only a 6% undervote. This suggests that voter fatigue may be more sporadic than a straight line implies. Something like Marijuana Legalization, even at the end of the ballot, may still grab the attention of a voter who would otherwise skip the late measures.

But, for campaigns, voters skipping out may not be the greatest concern. Ballot measures are still only decided by the yesses and no’s, with these undervotes just being missed opportunities for support. However, it’s when we get to the votes that are getting cast that things get much worse, fast.

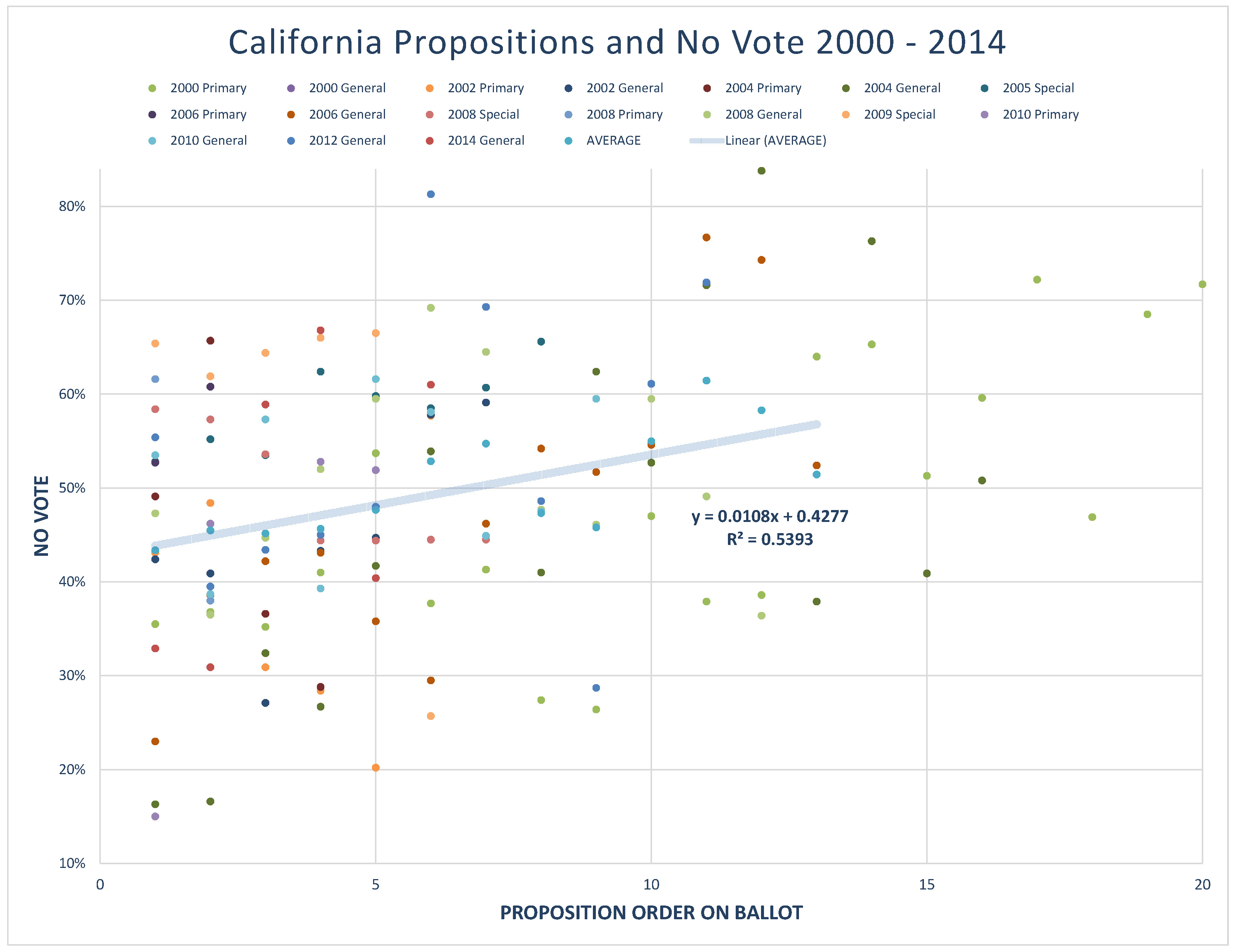

Using the same tools as above, but now looking at the percentage of “No” vote for the 142 measures since 2000, a strong positive relationship is seen between the order on the ballot and the vote outcome.

This time, the trend line gives us a starting point for most measures at 42% No, 58% Yes, and passing, in the first position. But by the 10th spot we have a trend line that shows an average of 52% No, 48% Yes, and failing. The formula gives us a relationship between ballot order and No votes of approximately one percentage point for each step down the ballot.
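As a rough check on those figures, the Python sketch below derives a slope from the two trend-line points quoted above and finds where the line reaches 50% No. The slope is an assumption implied by those two rounded points rather than the published coefficient, a simple majority is assumed for passage, and the function names are hypothetical.

```python
NO_AT_FIRST = 42.0  # percent "No" at position 1, as quoted in the text
NO_AT_TENTH = 52.0  # percent "No" at position 10, as quoted in the text

# Slope implied by the two quoted points (an assumption, about 1.1 points per spot).
SLOPE = (NO_AT_TENTH - NO_AT_FIRST) / (10 - 1)


def projected_no_share(position):
    """Trend-line 'No' share (%) for a measure at a 1-based ballot position."""
    return NO_AT_FIRST + SLOPE * (position - 1)


def first_failing_position(max_position=20):
    """First position where the trend-line 'No' share reaches 50%, else None."""
    for position in range(1, max_position + 1):
        if projected_no_share(position) >= 50.0:
            return position
    return None


print(first_failing_position())
```

Under these assumptions the trend line crosses from passing to failing between the eighth and ninth positions.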

If this relationship is true, this coming November, the 20th ballot measure would essentially have a 20-point anchor tied to it.

If you were to eschew the fancy math and just look at the success and failure of ballot measures at the top and bottom of the ballot, you would see the same trend. Looking at the top 16 spots, the measures in the first 8 positions on the ballot have passed 58% of the time, while those in positions 9-16 have a passage rate of only 34%.
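That simpler comparison amounts to grouping measures by ballot position and counting how many passed. A minimal Python sketch follows; the records are made up for illustration and are not the database described in this article, and `passage_rate` is a hypothetical helper.

```python
def passage_rate(results, first, last):
    """Share of measures that passed among 1-based positions first..last.

    `results` is a list of (position, passed) tuples; returns None when the
    range contains no measures.
    """
    outcomes = [passed for position, passed in results if first <= position <= last]
    if not outcomes:
        return None
    return sum(outcomes) / len(outcomes)


# Hypothetical records, NOT the real statewide results.
sample = [(1, True), (2, True), (3, False), (4, True),
          (9, False), (10, True), (11, False), (12, False)]

print("positions 1-8: ", passage_rate(sample, 1, 8))
print("positions 9-16:", passage_rate(sample, 9, 16))
```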

For campaigns, this is a potential killer, and one that a campaign would be unlikely to spot just by looking at its own research.

Voter surveys will generally poll a single ballot measure, or a couple related measures being supported by an organization or committee, not 20. The results of a single-measure poll could be off by double digits from the actual likely outcome given this drag.

And surveys are not going to be good at filtering out this percentage. Surveyors rarely have time to read a voter all 20 measures in a telephone survey, and they wouldn’t be stopping each time to ask the voter, “Do you feel like voting No on everything else yet?”

But, again, this data comes with a big caveat. The regression shows a high negative correlation between the ballot order and passage, but not necessarily causation. Could there be another reason that these ballot measures later down the ballot are failing at a higher rate?

It is very possible that since ballot measures are placed on the ballot in the order in which they qualify, the measures with greater funding, well-practiced consultants, better poll testing, and more support in the signature-gathering phase, are first through the gate, and the measures that take more time, or are in more disarray, or harder to collect signatures for, are later on the ballot.

There’s also the fact that referendums are put at the end of the ballot, and bond and constitutional measures come up first, maybe because the Legislature realized that there was a benefit to being on top. However, analysis looking just at the citizen initiatives shows the same result.

Could something be done about this? As we know, each election cycle candidates are placed on the ballot according to a randomized alphabet drawn by the Secretary of State, and that randomization is rotated one letter for each Assembly District down the state, ensuring that no candidates get the benefit of being in the top spot on every voter’s ballot. A similar thing could be done with ballot measures if lawmakers find that the negative trend associated with ballot order is distorting the outcomes of a democratic process.
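One way such a rotation might work for measures is sketched below in Python. It is a simplified illustration of the idea, not the Secretary of State’s actual procedure: the measure labels are placeholders, a seeded shuffle stands in for the official drawing, and `rotated_order` is a hypothetical helper.

```python
import random


def rotated_order(base_order, district_index):
    """Rotate the drawn order left by one slot per district (0-based index)."""
    shift = district_index % len(base_order)
    return base_order[shift:] + base_order[:shift]


# Placeholder measure labels; the fixed seed stands in for the official drawing.
measures = ["A", "B", "C", "D", "E"]
rng = random.Random(2016)
drawn = measures[:]
rng.shuffle(drawn)

# Each successive district sees the list shifted by one more position.
for district in range(3):
    print(district + 1, rotated_order(drawn, district))
```

Across as many districts as there are measures, each measure takes the top spot exactly once, which is the property that spreads any ballot-order penalty evenly.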

—

Ed’s Note: Paul Mitchell, a regular contributor to Capitol Weekly, is vice president of Political Data Inc., and owner of Redistricting Partners, a political strategy firm. This is the latest in a series of data-driven articles examining critical California issues in 2016.

This should really shake up campaign consultants. It would be interesting to see if you get any responses from these folks.

Agree wholeheartedly with the diagnosis. Excellent analysis. Disagree with the prescription.